AI Tinkerers Gdańsk Meetup – April 23rd

Join me as I break down Scaling RAG Hybrid Search: From Concept to 780K Pages

Piotr Chlebek · 2026-4-14

Abstract: Invitation to the AI Enthusiasts and Makers Meetup in Gdańsk.

Keywords: AI Tinkerers, Gdańsk, Meetup, RAG, LLM, Knowledge base

I’m happy to announce that I’ll be speaking at the AI Tinkerers Gdańsk meetup on April 23rd!

My talk, "Scaling RAG: Hybrid Search and Hierarchical Chunking for 780k Pages", tackles the challenges of scaling RAG across massive datasets.

As always with AI Tinkerers, expect:

- A great atmosphere

- An amazing community eager to exchange experiences

- Hands-on technical demos from real builders

Spots are limited, so don't miss out! Register here.

Related Materials

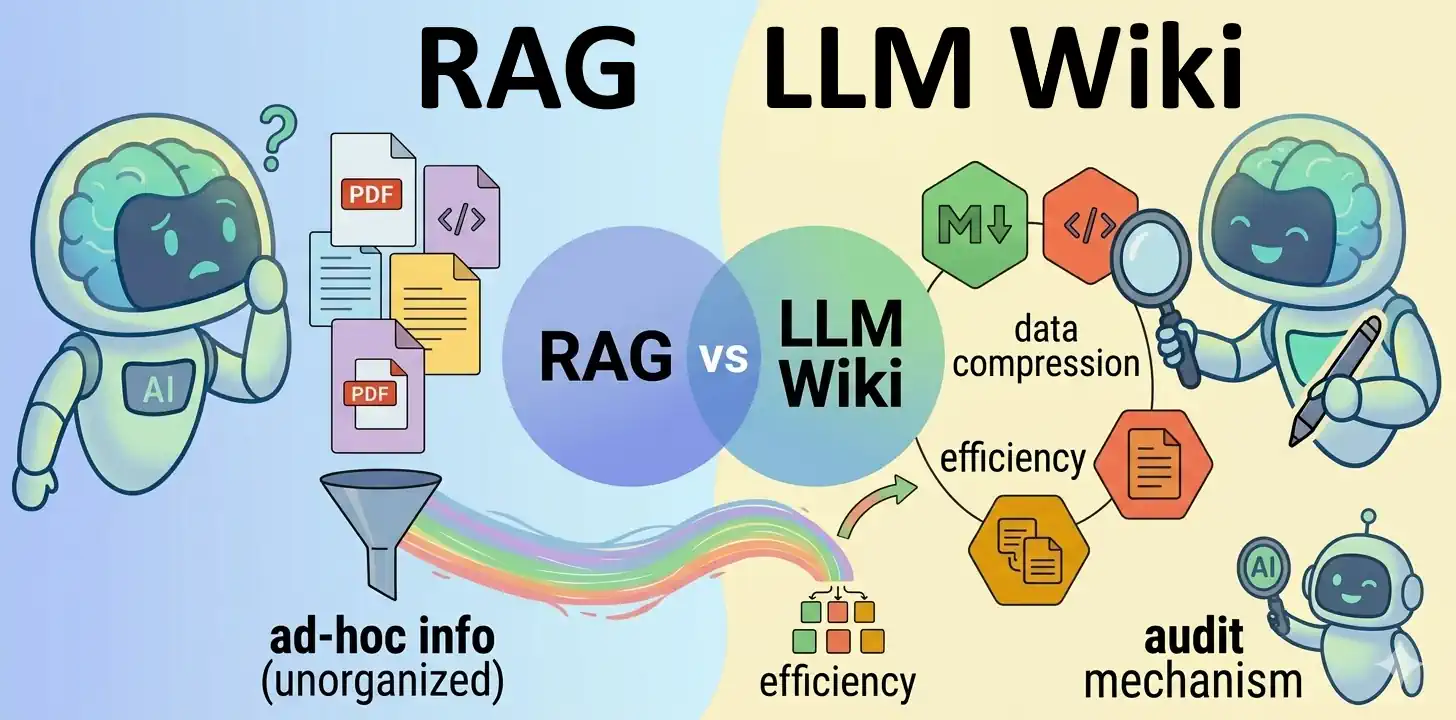

- LLM Wiki vs. RAG Knowledge Base

- Hybrid RAG with Semantic Chunking (work in progress)

- RAG isn't about AI; it's about engineering (work in progress)

- Serving the LLM locally with vLLM (work in progress)

Why RAG this time, instead of speech recognition?

For those who know me as a speech recognition expert: yes, RAG is a different ball game. The landscape of speech technology has changed: few companies nowadays need someone with deep, comprehensive expertise. More often, they look for mid-level engineers who can work with off-the-shelf components or ready-made solutions.

That is why I decided to branch out in other directions. RAG turns out to be challenging and complex enough, yet important and useful enough, that I felt a strong pull to dive deep into it. It also fits my career path: I’ve always been drawn to hard problems, such as chess programming, aircraft detection, fuzzy search in large datasets, various ML / AI / data science systems, and speech recognition. I have to admit, I’ve had a fixation on search algorithms for years, and RAG feeds that obsession perfectly.

Related Posts:

- Hybrid RAG with Semantic Chunking

- RAG isn't about AI; it's about engineering

- Serving the LLM locally with vLLM

- LLM Wiki vs. RAG Knowledge Base

References:

Image source: Google DALL-E 3 (04.2026).