RAG isn't about AI; it's about engineering

Stop believing in LLM magic – it’s time for serious data engineering and the 20 modules your tutorial forgot

Piotr Chlebek · 2026-4-(work in progress)

Abstract: This article critiques the oversimplified "happy path" of RAG tutorials, arguing that while a basic prototype takes minutes, a production-grade system requires months of rigorous engineering. The author moves beyond the naive "slice and search" approach to address the "hundred-headed monster" of real-world data and security, framing RAG not as AI magic, but as a complex, multi-layered infrastructure challenge that demands a professional, senior-level commitment to architectural depth.

Keywords: RAG engineering, Retrieval-Augmented Generation production, AI architecture, enterprise RAG, RAG implementation, LLM production deployment, semantic chunking, RAG data pipeline, vector database, RAG security, AI infrastructure, production-grade AI, RAG challenges, RAG modules.

RAG is an engineering challenge

While browsing the AI & Machine Learning Community group, I came across a post [1] and a presentation by Hastika Cheddy that hit the nail on the head. Her thesis on AI deployments was brutally honest:

“I audited 10+ enterprise RAG deployments. Every single one collapsed in production. Not because of the LLM. Not because of the embedding model. Because of broken architecture.”

These words resonated with me instantly. Since the foundations fail more often than the models themselves, it’s time for me to add my two cents to the discussion on building effective RAG systems.

RAG: 5 minutes in a tutorial, 5 months in production

Analyzing commonly available examples of RAG systems, it’s hard to escape the impression that we are stuck in a phase of naive and rushed implementations. This technology, while it promises the world, is in reality extremely demanding and temperamental. The temptation to quickly fire up a simple sequence – “slice text into pieces, throw it into a vector database, search” – is so strong that key engineering foundations often take a back seat.

Aspects such as reliable data quality, process auditability, or resistance to attacks are given dangerously low priority. To make matters worse, fundamental design decisions are made on the fly. An example is the mass use of fixed-size chunking, which, when faced with real-world business documents, almost always loses to more sophisticated, semantic segmentation techniques.
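
To make the chunking point concrete, here is a minimal sketch that compares fixed-size cuts with a simple structure-aware splitter. The helper names, the sample text, and the size limits are assumptions made for illustration. Packing whole paragraphs is only a lightweight stand-in for true semantic segmentation, which typically also uses headings, layout, or embeddings.

```python
import re


def fixed_size_chunks(text: str, size: int) -> list[str]:
    """Naive chunking: cut every `size` characters, ignoring structure."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def paragraph_chunks(text: str, max_chars: int) -> list[str]:
    """Structure-aware chunking: pack whole paragraphs up to `max_chars`."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks: list[str] = []
    current = ""
    for para in paragraphs:
        candidate = f"{current}\n\n{para}" if current else para
        if current and len(candidate) > max_chars:
            # The next paragraph does not fit: close the current chunk.
            chunks.append(current)
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


# Hypothetical sample document: two headings, each followed by one sentence.
SAMPLE = (
    "Refund policy\n\nRefunds are issued within 14 days of purchase.\n\n"
    "Shipping\n\nOrders ship within 2 business days."
)

naive = fixed_size_chunks(SAMPLE, 40)
aware = paragraph_chunks(SAMPLE, 70)

# The first naive chunk stops at "...issued within"; "14 days" lands in the next one.
print(naive)
# Each structure-aware chunk keeps a heading together with its sentence.
print(aware)
```

With the naive splitter, a question about the refund deadline can retrieve a chunk that names the policy but not the number of days. The paragraph-based version keeps the fact and its heading in one retrievable unit.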

Of course, there are more advanced RAG systems that have solid architectural foundations and work quite efficiently in production environments. However, they are not commonly discussed in tutorials – perhaps because they don't seem as appealing to those eager for quick results. They are associated with the long and meticulous building of a complex, multi-layered system.

In my view, it is precisely this complexity that is the core of the issue and the most interesting part of it. Therefore, I encourage you, the reader, to look at RAG as an engineering challenge. Here, we are not looking for "low-hanging fruit," but approaching the subject with senior-level seriousness – just as one approaches the design of any critical and complex system.

From three tiles on a diagram to a hundred-headed monster

Most diagrams we encounter online present RAG as an elegant and almost maintenance-free mechanism. The image is tempting: a document enters the machine, turns into vectors, passes through "three blocks total," and generates the ideal answer. Unfortunately, this vision of the “happy path” rarely survives its first collision with reality.

There are plenty of places where these schematic simplifications mask the actual complexity of the process:

(TBD: list of examples goes here)

In the real world, your data is not sterile text files, but "dirty" PDFs with multi-level tables, footers, and non-obvious structures. On top of that, there are users who rarely ask questions in a predictable and orderly manner. For the system to stop hallucinating and start actually solving problems, we must abandon the illusion of simplicity in favor of solid engineering that secures the process at every stage.

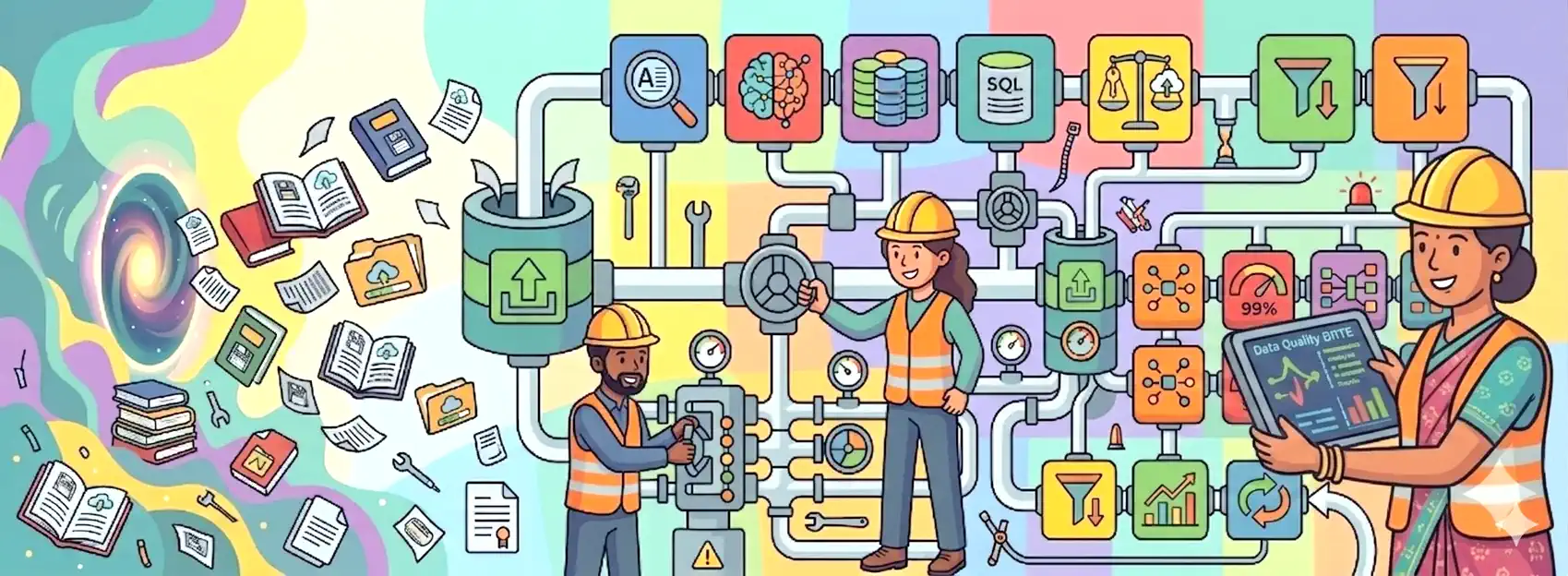

Why does a production system need 15+ modules, not just three?

The true anatomy of a professional RAG system does not boil down to just the three simple steps suggested by the acronym itself: Retrieve, Augment, and Generate. That is only the tip of the iceberg. If you are building a solution that is meant to be resilient to messy data, confused users, and even malicious manipulation attempts, you must look much deeper.

Below you will find a list of 20 components that should be considered – and in business conditions, usually simply implemented – for RAG to stop being just a technical curiosity and become a reliable enterprise-grade tool.

Only an architecture that takes these aspects into account allows for the creation of a system ready for crisis situations, audits, or potential legal disputes. It is worth remembering one principle: in RAG, the greatest engineering effort goes not into choosing the language model, but into building the “pipes” through which the data flows. This infrastructure, invisible at first glance, decides whether your system becomes real support or a spectacular deployment failure.

RAG is not a ready-made "out of the box" product, but a continuous process. Success does not depend on buying a subscription to the most expensive LLMs, but on the painstaking work on information quality and flow. Cognitive dissonance will disappear only when we stop treating AI like magic and start seeing it as another complex element of modern software architecture.

...work in progress...

In this post:

- (work in progress)

Related Posts:

- AI Tinkerers Gdańsk Meetup – April 23rd

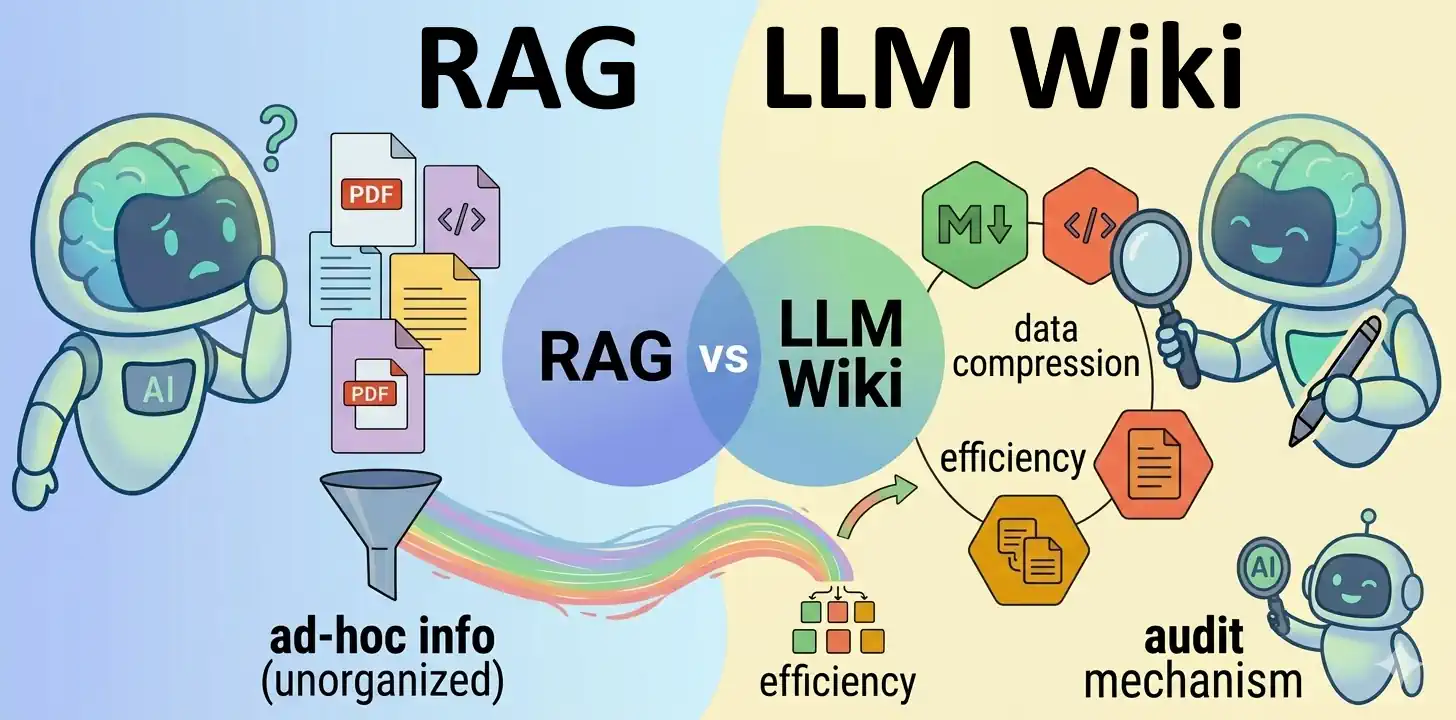

- LLM Wiki vs. RAG Knowledge Base

- Serving the LLM locally with vLLM

- Hybrid RAG with Semantic Chunking

References:

- [1] RAG isn’t an AI problem. It’s an engineering problem - Hastika Cheddy

- [.] karpathy/llm-wiki.md - Andrej Karpathy

- [.] How to Build a Document Processing Pipeline for RAG with Nemotron - Chia-Chih Chen, Moon Chung, Nave Algarici and Sean Sodha

- [.] Seven Failure Points When Engineering a Retrieval Augmented Generation System - by Scott Barnett, Stefanus Kurniawan, Srikanth Thudumu, Zach Brannelly, Mohamed Abdelrazek

- [.] RAG Isn’t a Modeling Problem. It’s a Data Engineering Problem - by Alex Merced

- [.] Towards Requirements Engineering for RAG Systems - by Tor Sporsem, Rasmus Ulfsnes

- [.] "RAG is Dead, Context Engineering is King" — with Jeff Huber of Chroma - ~1h interview with Jeff Huber

- [.] 10 RAG Failure Modes at Scale (and How to Fix Them) - by Bhagya Rana

Images Source: Google DALL-E 3 (04.2026).

Key components of a reliable RAG architecture

Data Ingestion Layer

This covers everything that happens before a user even asks a question. This is where you "clean and organize" knowledge.

| Component | Mechanism | Why it matters |

|---|---|---|

| ETL: Data Quality and Integrity (cleaning, deduplication, normalization) | Data selection and "cleanup": removing noise, fixing OCR errors, deleting duplicates, and unifying formats. | Guards against the GIGO (Garbage In, Garbage Out) effect. Clean data reduces the risk of hallucinations and incorrect model responses. |

| ETL: Advanced Parsing and Chunking | Breaks documents into logical fragments (chunks) while preserving structure (tables, headers, metadata). | Allows the system to precisely extract specific information from the database while maintaining context, which is essential for accurate LLM responses. |

| Incremental Updates | Automatically refreshes the vector database with new or modified data without the need to re-index everything from scratch. | Guarantees access to up-to-date information (e.g., prices or regulations) and drastically reduces system maintenance costs. |
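
As an illustration of the incremental-update row, the sketch below plans which documents to add, re-embed, or delete by comparing content hashes. The function names, document IDs, and the dictionary-based bookkeeping are assumptions made for this example. A real pipeline would persist the hashes next to the vector index and usually track individual chunks as well.

```python
import hashlib


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_incremental_update(
    indexed: dict[str, str], current_docs: dict[str, str]
) -> dict[str, list[str]]:
    """Compare stored hashes with the current corpus.

    indexed:      doc_id -> hash recorded at the last indexing run
    current_docs: doc_id -> current document text
    """
    plan: dict[str, list[str]] = {"add": [], "update": [], "delete": [], "skip": []}
    for doc_id, text in current_docs.items():
        if doc_id not in indexed:
            plan["add"].append(doc_id)      # new document: embed and insert
        elif indexed[doc_id] != content_hash(text):
            plan["update"].append(doc_id)   # changed: re-embed and replace
        else:
            plan["skip"].append(doc_id)     # unchanged: nothing to do
    # Documents that disappeared from the source must leave the index too.
    plan["delete"] = [doc_id for doc_id in indexed if doc_id not in current_docs]
    return plan


# Hypothetical state: what was indexed last time vs. what exists now.
indexed = {
    "pricing.md": content_hash("Plan A costs 10 EUR."),
    "faq.md": content_hash("How do I log in?"),
    "old.md": content_hash("Retired page."),
}
current = {
    "pricing.md": "Plan A costs 12 EUR.",
    "faq.md": "How do I log in?",
    "new.md": "Fresh page.",
}

plan = plan_incremental_update(indexed, current)
print(plan)
```

Only `pricing.md` and `new.md` need embedding work here, and `old.md` is removed so that stale content cannot be retrieved.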

Query Orchestration Layer

This is the moment when the system analyzes what the human is actually asking.

| Component | Mechanism | Why it matters |

|---|---|---|

| Query Routing | The system decides which database, model, or API to consult (e.g., whether to look into technical documentation or HR procedures). | Not every question requires searching through the company's entire knowledge base. The router saves time and increases precision by directing the query to the correct data "bucket." |

| Query Transformation (Rewriting and Expansion) | Rewrites the user's query into a form the search engine handles better, decomposes complex questions into several sub-queries, and can apply Hypothetical Document Embeddings (HyDE), i.e. searching with the embedding of a generated hypothetical answer instead of the raw question. | Users write messy queries. If they ask "And how much does it cost?", the system must know which product they were asking about two sentences earlier. |

| Memory Management | Storing and intelligently summarizing previous parts of the conversation. | If a user asks 10 questions in a row, sending the entire history to the model would consume a lot of space (tokens). You need a module that "remembers" only the most important threads. |

| Metadata Management and Filtering (Self-Querying) | Allows for hard filtering of results by date, department, or document type before semantic search. | If a client asks for "2024 reports," you don't want the model suggesting data from 2019 just because they are "semantically similar." |
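
The metadata-filtering row can be sketched as a hard filter applied before similarity ranking. Everything below is a toy under stated assumptions: the `Chunk` type, the regex-based year extraction, and the two-dimensional vectors are invented for the example. In self-querying setups the filter is often produced by an LLM rather than a regex.

```python
import math
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Chunk:
    text: str
    year: int
    vector: tuple[float, ...]


def cosine(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def extract_year(query: str) -> Optional[int]:
    """Very small stand-in for query understanding: find a 4-digit year."""
    match = re.search(r"\b(?:19|20)\d{2}\b", query)
    return int(match.group(0)) if match else None


def search(query: str, query_vector: tuple[float, ...],
           chunks: list[Chunk], top_k: int = 2) -> list[Chunk]:
    year = extract_year(query)
    # Hard filter first: chunks from other years never reach the ranking step.
    candidates = [c for c in chunks if year is None or c.year == year]
    return sorted(candidates, key=lambda c: cosine(query_vector, c.vector),
                  reverse=True)[:top_k]


# Toy corpus: the 2019 report is the closest vector, but it is from the wrong year.
corpus = [
    Chunk("Annual report 2019", 2019, (1.0, 0.0)),
    Chunk("Annual report 2024", 2024, (0.9, 0.1)),
    Chunk("HR memo 2024", 2024, (0.0, 1.0)),
]

results = search("2024 reports", (1.0, 0.0), corpus)
print([c.text for c in results])
```

Without the filter, the 2019 report would rank first on similarity alone, which is exactly the failure described in the table.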

Retrieval & Ranking Layer

The heart of RAG is finding a needle in a haystack. This group is responsible for the quality of the retrieved documents.

| Component | Mechanism | Why it matters |

|---|---|---|

| Hybrid Search | Combines vector search (semantic), keyword search (BM25), and graph search (relationship-based). Sometimes includes multimodal search. | Vectors capture intent but struggle with specific identifiers like serial numbers, which keyword search matches exactly. Adding graph search allows the system to traverse entity relationships and hierarchies, uncovering deep context that keyword or vector proximity might miss. |

| Re-ranking | Recalculates the relevance of the top results returned by the search engine using a more precise model. | Vector search is fast but often "noisy." A re-ranker ensures that the most truly relevant fragments make it to the very top. |

| Deduplication and Result Selection (Post-processing) | Discarding redundant, nearly identical fragments in favor of unique content (e.g., using the MMR algorithm). | Ensures a diversity of information in the context window. Instead of repeating the same thing, the model receives a broader spectrum of data, which improves answer quality. |
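
The MMR algorithm mentioned in the last row can be sketched in a few lines. This is a minimal version assuming cosine similarity and toy two-dimensional vectors. The `lambda_` weight of 0.5 and the sample vectors are arbitrary choices for illustration, not recommended settings.

```python
import math

Vector = tuple[float, ...]


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mmr(query_vec: Vector, doc_vecs: list[Vector], k: int,
        lambda_: float = 0.5) -> list[int]:
    """Maximal Marginal Relevance: return indices of up to k selected documents.

    Each step picks the document that best balances relevance to the query
    against similarity to the documents already selected.
    """
    selected: list[int] = []
    remaining = list(range(len(doc_vecs)))
    while remaining and len(selected) < k:
        def score(i: int) -> float:
            relevance = cosine(query_vec, doc_vecs[i])
            redundancy = max(
                (cosine(doc_vecs[i], doc_vecs[j]) for j in selected), default=0.0
            )
            return lambda_ * relevance - (1 - lambda_) * redundancy

        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


# Toy data: documents 0 and 1 are near-duplicates, document 2 covers another angle.
query = (1.0, 0.2)
docs = [(0.99, 0.05), (1.0, 0.0), (0.6, 0.8)]

diverse = mmr(query, docs, k=2, lambda_=0.5)
relevance_only = mmr(query, docs, k=2, lambda_=1.0)
print(diverse, relevance_only)
```

With `lambda_=1.0` the selection degenerates to plain relevance ranking and returns both near-duplicates. With `lambda_=0.5` the second slot goes to the document that adds new information.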

Generation & Trust Layer

This is where the LLM creates the response, and the system ensures it is safe and accurate.

| Component | Mechanism | Why it matters |

|---|---|---|

| Prompt Engineering and Context Compression | Optimizes how data is fed to the LLM by selecting only the most relevant fragments to avoid exceeding the context window and to save tokens. | Stuffing the prompt with too much text dilutes the signal: models tend to overlook facts buried in the middle of a long context (the "Lost in the Middle" phenomenon). |

| Guardrails – Security and Reliability | Acts as an intelligent filter for queries and responses. It blocks profanity and attempts to extract sensitive data, and it verifies that the answer is actually based on your documents (grounding). | Protects against PR disasters and hallucinations. It ensures the bot doesn't reveal company secrets, isn't vulgar, and doesn't invent facts that aren't in the database. |

| Citations & Attribution | The model must provide the specific source (e.g., a link to a PDF file or a page number) from which it drew the information. | This is the absolute foundation of trust. Users must be able to quickly verify that the model isn't hallucinating. Without source links, RAG loses its greatest advantage. |

| Confidence / Uncertainty Scoring | An assessment of how much the system "trusts" the generated response. | Allows the system to show the user the level of certainty and decide whether to return the answer at all. |

| Fallback Scenarios and Error Handling | Handles situations such as: no results found, low confidence, or timeouts. | A system without fallbacks will eventually provide a nonsensical answer instead of saying "I don't know." |
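
The fallback, confidence, and citation rows combine naturally into one answer path. The sketch below is an assumption-heavy illustration: `retrieve` and `generate` are hypothetical callables standing in for the retrieval stack and the LLM call, the retrieval score is used as a crude confidence proxy, and the `min_score` threshold of 0.5 is arbitrary.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Hit:
    source: str   # e.g. file name plus page, used for the citation
    text: str
    score: float  # retrieval or re-ranker score in [0, 1]


FALLBACK = "I don't know. I could not find this in the available documents."


def answer(question: str,
           retrieve: Callable[[str], list[Hit]],
           generate: Callable[[str, list[Hit]], str],
           min_score: float = 0.5) -> dict:
    """Answer only when there is sufficiently relevant context; otherwise fall back."""
    try:
        hits = retrieve(question)
    except TimeoutError:
        return {"status": "timeout", "sources": [],
                "answer": "The knowledge base is temporarily unavailable."}

    relevant = [h for h in hits if h.score >= min_score]
    if not relevant:
        # No results or low confidence: refuse instead of letting the model guess.
        return {"status": "no_context", "sources": [], "answer": FALLBACK}

    return {"status": "ok",
            "sources": [h.source for h in relevant],  # citations for the user
            "answer": generate(question, relevant)}


# Hypothetical stubs standing in for real components.
def stub_retrieve(question: str) -> list[Hit]:
    if "refund" in question.lower():
        return [Hit("policy.pdf#page=3", "Refunds are issued within 14 days.", 0.82)]
    return [Hit("misc.pdf#page=9", "Unrelated text.", 0.12)]


def stub_generate(question: str, hits: list[Hit]) -> str:
    return f"According to {hits[0].source}: {hits[0].text}"


good = answer("What is the refund deadline?", stub_retrieve, stub_generate)
unknown = answer("Who won the 1998 World Cup?", stub_retrieve, stub_generate)
print(good["status"], unknown["status"])
```

The design choice worth noting is that the LLM is never called when the context is missing or weak, so the refusal is a property of the pipeline rather than a hope about model behavior.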

Operations & Governance Layer

This is what makes the system affordable, fast, and ensures we know why it sometimes fails.

| Component | Mechanism | Why it matters |

|---|---|---|

| Access Control (Permissions and ACLs) | Ensures the user only sees what they are authorized to access. | Without this, RAG could leak HR data, financial records, or confidential documents. |

| Caching Layer (Semantic Cache) | Remembers answers to similar questions and returns them without re-querying the LLM. | Scaling is expensive. Caching can reduce latency and API costs, by as much as 30-50% when similar questions recur often. |

| Evaluation Module (RAGAS/TruLens) | Automated tests measuring Faithfulness and Context Relevancy. | Without metrics, you don't know if changing a model or chunk size actually improves the system or breaks it. A "vibe check" is not a strategy. |

| Observability & Tracing | A full log of every step + metrics: what was retrieved, what went into the prompt, and how the model responded. | When a user reports an error, you need to see exactly which module failed: whether the retrieval failed or the LLM failed. |

| Feedback Loop (Human-in-the-loop) | Supports collecting feedback from users to improve the system. | Even the best evaluation cannot replace the opinion of a real human. This data allows you to later fine-tune the system or correct erroneous fragments in the knowledge base. |
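
A semantic cache, as described in the caching row, can be sketched as a similarity lookup over previously answered questions. The `SemanticCache` class, the keyword-count `toy_embed` function, and the 0.9 threshold are assumptions made for this example. A real deployment would use an actual embedding model and would also need to scope cache entries by user permissions so that cached answers do not bypass access control.

```python
import math
from typing import Callable, Optional

Vector = tuple[float, ...]


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Returns a stored answer when a new question is close enough to an old one."""

    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.9):
        self.embed = embed
        self.threshold = threshold
        self.entries: list[tuple[Vector, str]] = []

    def get(self, question: str) -> Optional[str]:
        vec = self.embed(question)
        best_answer, best_sim = None, self.threshold
        for cached_vec, cached_answer in self.entries:
            sim = cosine(vec, cached_vec)
            if sim >= best_sim:
                best_answer, best_sim = cached_answer, sim
        return best_answer  # None means cache miss: call the full pipeline

    def put(self, question: str, answer: str) -> None:
        self.entries.append((self.embed(question), answer))


# Toy embedding (assumption): counts of a few keywords instead of a real model.
VOCAB = ("reset", "password", "invoice", "download")


def toy_embed(text: str) -> Vector:
    lowered = text.lower()
    return tuple(float(lowered.count(word)) for word in VOCAB)


cache = SemanticCache(toy_embed, threshold=0.9)
cache.put("How do I reset my password?", "Use the 'Forgot password' link.")

hit = cache.get("Password reset steps?")
miss = cache.get("Where can I download my invoice?")
print(hit, miss)
```

The threshold is the sensitive parameter: set too low, the cache returns answers to questions that only look similar, and set too high, it rarely hits.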